Full Duplex Communication System for Hearing-Impaired Users (Bachelor's Final Year Project)

[GitHub] [Paper] [Full Report]

Overview

This project presents a bi-directional (full-duplex) communication system designed to bridge the gap between hearing-impaired individuals and the general public. It translates spoken English into visual Indian Sign Language (ISL) gestures in real time, while simultaneously converting ISL gestures captured from live video back into audible speech.

The Problem

Communication barriers persist because of a shortage of qualified ISL interpreters and limited public awareness of ISL. These barriers can lead to social isolation and reduced access to information. Our project addresses this challenge with a low-cost, accessible technological communication aid.

Approach & Technical Details

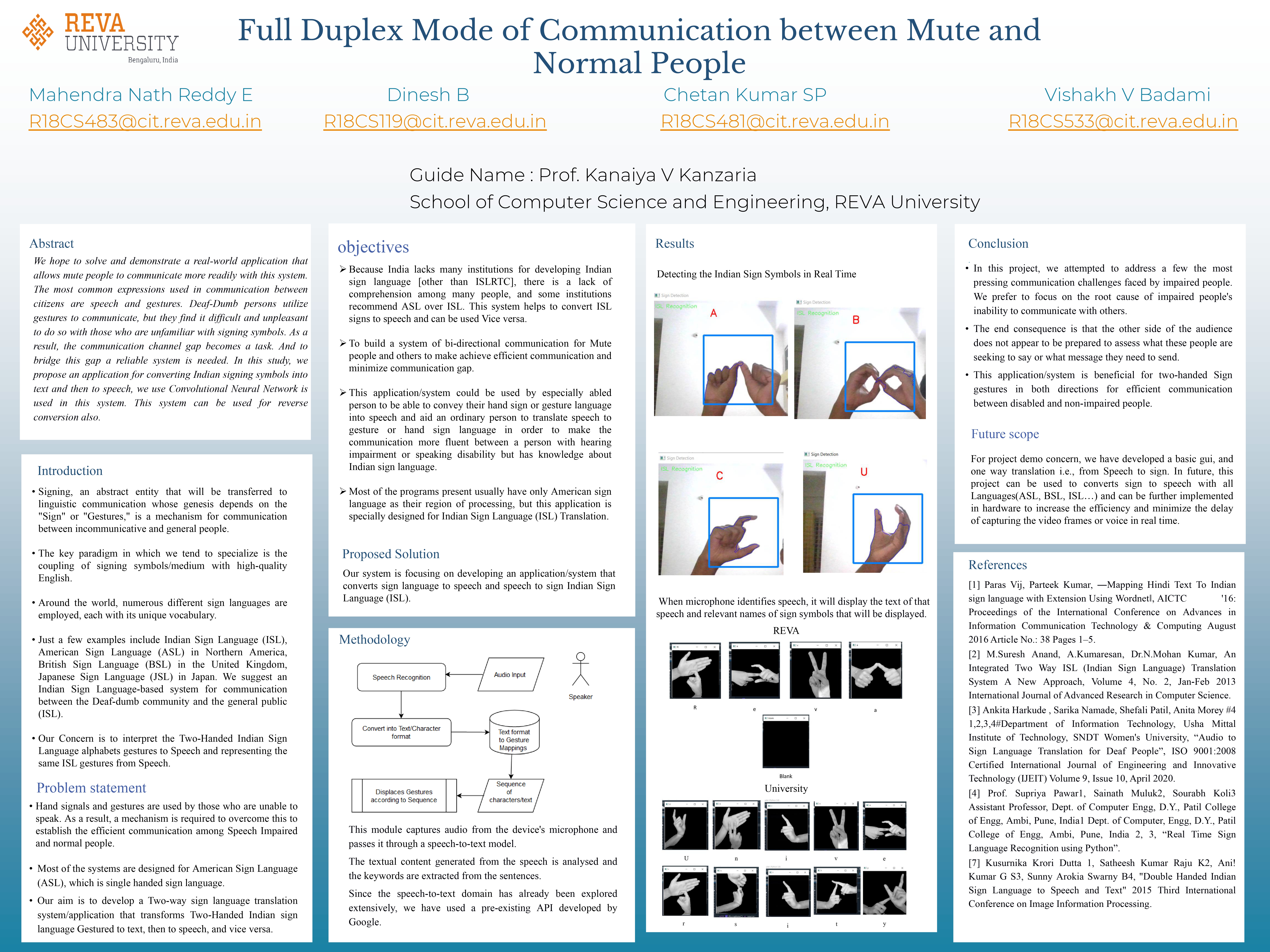

- Speech-to-Sign Translation Module: Captures spoken English with a microphone, converts the audio to text via the Google Speech Recognition API, and displays the corresponding ISL gestures.

- Sign-to-Speech Translation Module: Uses a webcam to capture ISL gestures. OpenCV processes the video, and a CNN built with TensorFlow and Keras classifies each gesture as a letter; the recognized letters are then converted to speech using gTTS (Google Text-to-Speech).
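The speech-to-sign path can be sketched roughly as follows. This is a minimal sketch under stated assumptions, not the project's code: it assumes the third-party SpeechRecognition package (whose `recognize_google` call wraps the Google Speech Recognition web API) and a hypothetical gesture library in which words with a dedicated ISL sign have their own clip while every other word is fingerspelled letter by letter. The names `text_to_gesture_sequence` and `gesture_files`, and the `<key>.gif` file naming, are illustrative only.

```python
from __future__ import annotations

from pathlib import Path


def listen_once() -> str:
    """Capture one utterance from the default microphone and transcribe it.

    Assumes the SpeechRecognition and PyAudio packages are installed;
    recognize_google sends the audio to Google's web speech service.
    """
    import speech_recognition as sr  # imported lazily so the rest runs without it

    recognizer = sr.Recognizer()
    with sr.Microphone() as source:
        recognizer.adjust_for_ambient_noise(source)  # calibrate to background noise
        audio = recognizer.listen(source)
    return recognizer.recognize_google(audio)


def text_to_gesture_sequence(text: str, phrase_vocab: set[str]) -> list[str]:
    """Map transcribed text to an ordered list of gesture keys.

    Hypothetical rule: a word found in phrase_vocab maps to its own sign;
    any other word is fingerspelled, one key per character.
    """
    sequence: list[str] = []
    for raw_word in text.lower().split():
        # Drop punctuation such as "," or "?" attached to the word.
        word = "".join(ch for ch in raw_word if ch.isalnum())
        if not word:
            continue
        if word in phrase_vocab:
            sequence.append(word)
        else:
            sequence.extend(word)  # "cat" -> "c", "a", "t"
    return sequence


def gesture_files(sequence: list[str], gesture_dir: Path, suffix: str = ".gif") -> list[Path]:
    """Resolve gesture keys to media files (hypothetical naming scheme)."""
    return [gesture_dir / f"{key}{suffix}" for key in sequence]
```

For example, with `{"hello", "you"}` as the sign vocabulary, `"Hello, how are you?"` becomes `['hello', 'h', 'o', 'w', 'a', 'r', 'e', 'you']`. Falling back to fingerspelling keeps the output complete for words outside the sign vocabulary, at the cost of longer gesture sequences.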

Key Technologies: Python | TensorFlow & Keras | OpenCV | Google Speech Recognition API | gTTS
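In the sign-to-speech direction, a classifier that runs on every video frame needs some rule for deciding when a letter has actually been signed; otherwise a held gesture would be emitted many times per second. The sketch below shows one such rule, a stability window combined with a confidence threshold. The A to Z class order, the thresholds, and the `LetterDebouncer` name are assumptions for illustration, not details taken from the project. The final step uses gTTS's `gTTS(text=..., lang=...)` constructor and its `save` method.

```python
from __future__ import annotations

import string
from typing import Sequence

# Hypothetical class order for the CNN output: index 0 -> "A", ..., 25 -> "Z".
LABELS = list(string.ascii_uppercase)


class LetterDebouncer:
    """Accept a letter only after it is the confident top prediction
    for `hold_frames` consecutive frames."""

    def __init__(self, hold_frames: int = 15, min_confidence: float = 0.8):
        self.hold_frames = hold_frames
        self.min_confidence = min_confidence
        self._candidate = None      # letter currently being held
        self._count = 0             # consecutive frames it has been on top
        self._last_emitted = None   # blocks repeats until a low-confidence frame
        self.text = ""

    def update(self, probs: Sequence[float]) -> str | None:
        """Feed one frame's class probabilities; return a newly accepted letter."""
        best = max(range(len(probs)), key=lambda i: probs[i])
        if probs[best] < self.min_confidence:
            # Low-confidence frame (e.g. the hand is moving): reset so the
            # same letter can be signed twice in a row.
            self._candidate, self._count, self._last_emitted = None, 0, None
            return None
        letter = LABELS[best]
        if letter == self._candidate:
            self._count += 1
        else:
            self._candidate, self._count = letter, 1
        if self._count == self.hold_frames and letter != self._last_emitted:
            self._last_emitted = letter
            self.text += letter
            return letter
        return None


def speak(text: str, path: str = "output.mp3") -> str:
    """Synthesize text to an MP3 file with gTTS (requires network access)."""
    from gtts import gTTS  # imported lazily; third-party package

    gTTS(text=text, lang="en").save(path)
    return path
```

Requiring a low-confidence frame between identical letters is one simple way to spell words such as "HELLO"; a real system might instead use a timeout or an explicit separator gesture.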

Results & Outcomes

- High Accuracy: The gesture-recognition CNN achieved ~99% accuracy on the validation dataset.

- Functional Prototype: Both modules integrated into a real-time, bi-directional communication loop.

- Impactful Solution: Demonstrates a scalable, accessible alternative to human interpreters for basic communication.
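Running both translation directions as one full-duplex loop amounts to executing them concurrently, so that a blocking call in one direction (waiting for speech, for instance) never stalls the other. The following is a minimal sketch of that structure with one thread and one queue per direction. It is not the project's integration code: `start_full_duplex`, `worker`, and the `STOP` sentinel are hypothetical names, and any callables can stand in for the real speech-to-sign and sign-to-speech modules.

```python
from __future__ import annotations

import queue
import threading
from typing import Callable, List

STOP = None  # sentinel value that shuts a direction down


def worker(inbox: queue.Queue, translate: Callable, outbox: List) -> None:
    """Consume items until STOP, translating each one as it arrives."""
    while True:
        item = inbox.get()
        if item is STOP:
            break
        outbox.append(translate(item))


def start_full_duplex(speech_to_sign: Callable, sign_to_speech: Callable):
    """Start both directions; return their input queues, outputs and threads."""
    audio_in: queue.Queue = queue.Queue()  # e.g. transcribed utterances
    video_in: queue.Queue = queue.Queue()  # e.g. recognized letter strings
    signs_out: List = []
    speech_out: List = []
    threads = [
        # daemon=True so a stuck worker cannot keep the program alive
        threading.Thread(target=worker, args=(audio_in, speech_to_sign, signs_out), daemon=True),
        threading.Thread(target=worker, args=(video_in, sign_to_speech, speech_out), daemon=True),
    ]
    for t in threads:
        t.start()
    return audio_in, video_in, signs_out, speech_out, threads
```

As a usage example, calling `start_full_duplex(str.upper, str.lower)`, putting `"hello"` on the audio queue and `"HI"` on the video queue, then sending `STOP` to both and joining the threads leaves `["HELLO"]` and `["hi"]` in the two output lists. Each direction only ever blocks on its own queue, which is what lets them progress independently.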